Search engine indexing is necessary for improving your website’s SEO.

This article explains how search engines crawl and index content, the importance of backlink indexing, the differences between manual and automated solutions, and the role of technical SEO.

It also covers mobile-first indexing, the use of XML sitemaps, and strategies to monitor and optimize your indexing performance.

What is search engine indexing and how does it impact SEO?

Search engine indexing is the systematic process where search engines collect, analyze, and store web content in specialized databases for rapid information retrieval. This foundational process determines how effectively search engines can discover and serve your content to users.

When content is properly indexed, it becomes eligible to appear in search results and compete for rankings. Poor indexing can prevent even high-quality content from being found, while optimized indexing increases visibility and ranking potential.

How do search engines process and store web content?

Search engines process and store web content through specialized data structures called inverted indices that enable fast information retrieval.

The process starts with web crawlers discovering URLs and analyzing page content through tokenization, which breaks content into individual searchable elements.

These elements undergo parsing to extract meaningful data including text, metadata, and media information.

The processed data is then organized using advanced storage techniques:

| Storage component | Purpose | Impact on search |
|---|---|---|
| Inverted index | Maps terms to documents | Enables millisecond retrieval |
| Forward index | Lists words per document | Supports content analysis |
| Document-term matrix | Tracks term relationships | Powers relevancy scoring |
| Citation index | Records link relationships | Influences authority metrics |
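To make the forward and inverted index concrete, here is a minimal sketch in Python. It assumes a tiny in-memory collection of three hypothetical pages and a very simple tokenizer; production search engines use far more elaborate tokenization, compression, and ranking, so treat this only as an illustration of the data structures.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_indexes(docs):
    """Return (forward_index, inverted_index) for a mapping of URL -> text."""
    forward = {}                  # URL -> list of tokens on that page
    inverted = defaultdict(set)   # token -> set of URLs containing it
    for url, text in docs.items():
        tokens = tokenize(text)
        forward[url] = tokens
        for token in tokens:
            inverted[token].add(url)
    return forward, dict(inverted)


def search(inverted, query):
    """Return the URLs that contain every query term (boolean AND)."""
    terms = tokenize(query)
    if not terms:
        return set()
    results = set(inverted.get(terms[0], set()))
    for term in terms[1:]:
        results &= inverted.get(term, set())
    return results


# Hypothetical documents used only for illustration.
docs = {
    "/a": "Search engines crawl pages",
    "/b": "Crawl budget affects large sites",
    "/c": "Search results depend on indexing",
}
forward, inverted = build_indexes(docs)
print(sorted(search(inverted, "search crawl")))
```

A lookup only touches the sets for the query terms rather than scanning every document, which is why the inverted index is the structure that makes fast retrieval possible.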

Why is indexing essential for search engine rankings?

Indexing is essential for search engine rankings because it creates the foundation for content visibility and evaluation in search results.

Without proper indexing, search engines cannot include your content in their results pages, regardless of its quality or relevance. Effective indexing provides these key benefits:

- Enables content discovery and inclusion in search results

- Facilitates accurate relevancy assessment

- Supports proper evaluation of content quality

- Allows tracking of content updates

- Enables relationship mapping between pages

- Powers quick retrieval during searches

- Supports advanced ranking algorithms

What are the key components of the indexing process?

The key components of the indexing process form an interconnected system that transforms raw web content into searchable data.

Each component serves a specific function:

| Category | Feature | Description |
|---|---|---|
| Content discovery | URL identification and validation | Identifies new URLs and checks if they’re valid for indexing. |
| | Robots.txt interpretation | Reads website rules to know which pages can be crawled. |
| | XML sitemap processing | Processes sitemaps to prioritize and locate pages efficiently. |
| | Link relationship mapping | Tracks internal and external links to build site structure. |
| Processing pipeline | HTML parsing and rendering | Converts HTML code to readable content for search engines. |
| | JavaScript execution | Runs JavaScript to capture dynamic page content. |
| | Content extraction | Extracts text, images, and other media for analysis. |
| | Language detection | Identifies the primary language of the content. |
| | Media processing | Processes images, videos, and other media types. |
| | Schema markup analysis | Reads structured data to understand page context and elements. |
| Data organization | Inverted index creation | Builds an index to quickly locate terms in documents. |
| | Document classification | Categorizes pages based on topics or content type. |
| | Entity recognition | Identifies key entities, such as names and places, in content. |
| | Relationship mapping | Maps connections between pages, topics, and entities. |
| | Authority scoring | Evaluates content authority to impact search ranking. |
| Technical infrastructure | Database optimization | Enhances data storage for quick access and minimal space. |
| | Cache management | Temporarily stores frequently used data to speed up retrieval. |
| | Query processing | Handles search requests to provide relevant results fast. |
| | Load balancing | Distributes processing load across multiple servers. |
| | Fault tolerance | Ensures search functions continue even with system issues. |

How do search engines discover and crawl web content?

Search engines rely on sophisticated web crawlers to systematically discover, analyze, and index online content. These automated programs, also known as spiders or bots, methodically explore websites by following links and processing page content.

The discovery and crawling process forms the foundation for effective search engine indexing and directly impacts how quickly your backlinks get noticed and valued.

What are the primary methods of content discovery?

Content discovery happens through six main channels that search engines use to find and process new web pages. The primary methods include following internal and external links, processing XML sitemaps, and accepting direct URL submissions through webmaster tools.

Each discovery method contributes uniquely to how search engines build their index and understand website relationships.

Key discovery methods include:

- Internal links: Guide users and search engines through the site, creating a structured navigation path for efficient crawling.

- External backlinks: Act as endorsements from other sites, enhancing authority and improving search engine rankings.

- XML sitemaps: Contain a list of URLs on a site, facilitating faster and more comprehensive discovery by search engines.

- Direct submissions: Allow site owners to submit URLs directly to search engines, which prompts a crawl request but does not by itself guarantee that the page will be indexed.

- RSS feeds: Provide automated content updates for subscribers and search engines, supporting ongoing tracking and visibility.

- Social signals: Indicate the popularity and engagement level of content, aiding in secondary discovery and influence on ranking factors.

How does the crawling process work?

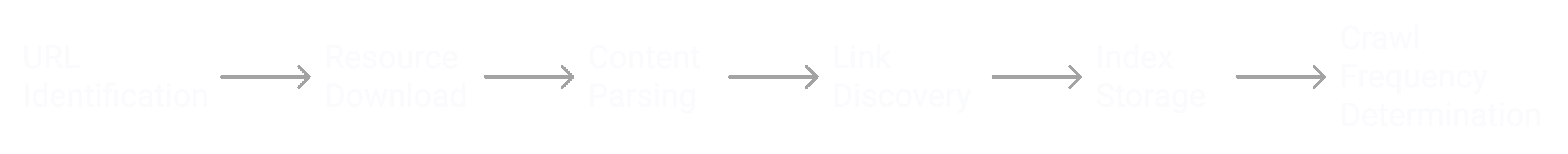

The crawling process operates through a systematic six-step sequence that begins with URL discovery and ends with scheduling future crawls.

Search engine bots download page content, process HTML and other resources, extract meaningful data, and store information in their index.

This automated system runs continuously, with crawlers processing millions of pages daily while following specific protocols and restrictions.

The crawling process steps are as follows:

- Initial URL identification and prioritization: occurs within the first 0-24 hours.

- Resource download and rendering: takes about 1-5 seconds per page.

- Content parsing and data extraction: completes in milliseconds.

- Link discovery and crawl queue updates: run as a continuous process.

- Index storage and organization: happens in real time.

- Crawl scheduling: the frequency of future crawls is determined based on page authority.
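The discover, fetch, extract, and queue loop described above can be sketched as a breadth-first traversal. This is a simplified illustration, not how any particular search engine works: `LINK_GRAPH` is a hypothetical in-memory site standing in for real HTTP fetches, and the sketch skips robots.txt checks, rendering, and priority-based scheduling.

```python
from collections import deque

# Hypothetical site: each URL maps to the links found on that page.
LINK_GRAPH = {
    "/": ["/blog", "/products"],
    "/blog": ["/blog/post-1", "/"],
    "/products": ["/products/widget"],
    "/blog/post-1": ["/products/widget"],
    "/products/widget": [],
    "/orphan": [],  # no inbound links, so link-following never reaches it
}


def crawl(seed, fetch_links, max_pages=100):
    """Visit pages breadth-first from the seed and return the crawl order."""
    frontier = deque([seed])  # crawl queue of discovered, unvisited URLs
    seen = {seed}             # every URL ever queued, to avoid repeats
    order = []
    while frontier and len(order) < max_pages:
        url = frontier.popleft()
        order.append(url)               # "download and process" the page
        for link in fetch_links(url):   # link discovery
            if link not in seen:
                seen.add(link)
                frontier.append(link)   # crawl queue update
    return order


crawled = crawl("/", lambda url: LINK_GRAPH.get(url, []))
print(crawled)
```

Note that `/orphan` is never visited. A page with no inbound links can only be found through another channel, such as a sitemap or a direct submission.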

What factors influence the crawl budget?

Crawl budget allocation depends on multiple technical and quality factors that determine how often search engines visit your pages. Website authority scores, server performance metrics, and content update patterns significantly impact crawl frequency.

Critical crawl budget factors are:

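- A higher domain authority score (a third-party estimate on a 0-100 scale, not a metric that search engines publish) tends to correlate with more crawl resources, as it reflects the relevance and trust signals that crawlers respond to.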

- Server response time under 200ms helps pages load quickly, maximizing crawl efficiency.

- Frequent content updates (daily, weekly, or monthly) encourage search engines to visit regularly for fresh content.

- More indexed pages reflect a larger site that may need a higher crawl budget to cover all important pages.

- Proper internal link distribution guides search engines to high-value pages, optimizing crawl allocation.

- High mobile optimization ensures the site is mobile-friendly, supporting mobile-first indexing by search engines.

- Security with HTTPS fosters a trustworthy environment, increasing crawl priority for secure pages.

Why do pages sometimes fail to get crawled?

Pages fail to get crawled because of technical barriers, content quality issues, or intentional crawl restrictions that prevent search engines from accessing or processing content effectively.

Common technical issues include server errors, robots.txt blocks, and broken internal links that create dead ends for crawlers.

Poor site architecture and low-quality content can also lead search engines to deprioritize certain pages.

Common crawling issues include:

| Issue type | Impact level | Solution approach |
|---|---|---|
| Robots.txt blocks | Critical | Review and update directives |
| Server errors | High | Fix 4xx and 5xx responses |
| Broken links | Medium | Regular link audits |
| Duplicate content | Medium | Implement canonical tags |
| Poor internal linking | High | Improve site structure |
| Slow load times | Medium | Optimize performance |
| JavaScript issues | High | Enable proper rendering |
| Incorrect canonicals | Medium | Fix tag implementation |

What is backlink indexing and why should you care about it?

Backlink indexing is the essential process where search engines discover, validate, and store incoming links to your website in their databases. This critical SEO component determines how quickly and effectively your backlinks contribute to your site’s authority and rankings. Properly indexed backlinks can accelerate ranking improvements by up to 57% compared to unindexed links.

How does the process of backlink indexing actually work?

Backlink indexing operates through a systematic process where search engine crawlers discover and process links pointing to your website.

Google typically processes new backlinks within 4-8 days, though this can vary based on multiple factors.

Key indexing stages and timeframes:

| Stage | Duration | Key activities |
|---|---|---|
| Discovery | 1-2 days | Link detection and initial crawl |
| Analysis | 2-3 days | Context evaluation and validation |
| Processing | 1-2 days | Database integration and value assignment |
| Verification | 1 day | Final checks and confirmation |

What problems can slow backlink indexing create for your site?

Slow backlink indexing creates significant ranking delays and diminishes your SEO investment returns by preventing search engines from recognizing valuable link signals.

When backlinks remain unindexed, your website fails to receive timely authority transfers, competitive advantages, and ranking improvements.

The critical impacts of delayed indexing are:

- Ranking improvements occur 40% more slowly, delaying the positive impact of backlinks on search results.

- Gains in competitive advantage are delayed, as search engines take longer to recognize and attribute value from new backlinks.

- Authority accumulation happens at a reduced speed, limiting your site’s potential for growth in search engine trust and relevance.

- Performance measurement remains incomplete, making it difficult to assess the effectiveness of link-building efforts accurately.

How do unindexed backlinks impact your SEO performance?

Unindexed backlinks significantly impair your SEO performance by preventing search engines from recognizing and valuing these important ranking signals.

Until a backlink is indexed, search engines cannot factor it into their ranking algorithms, which makes your link-building investment temporarily worthless. The longer indexing takes, the longer ranking improvements are delayed and the lower the return on investment (ROI) of your link-building efforts.

What are the most common challenges in backlink indexing?

Backlink indexing is critical to SEO success, as indexed backlinks help search engines recognize a website’s authority and relevance.

However, many obstacles can prevent backlinks from being indexed efficiently, slowing down ranking improvements and reducing the value of link-building efforts.

Common indexing barriers include:

| Challenge Category | Specific Issues | Description |
|---|---|---|
| Technical Issues | JavaScript Rendering Failures | Backlinks in JavaScript may be inaccessible to crawlers. |
| | Complex Site Architecture | Complicated structures hinder crawlers, impacting backlink indexing. |
| | Crawler Blocking | Misconfigured robots.txt or meta tags can block access to pages. |
| Content Problems | Low-Quality Content | Backlinks to low-value content may not be prioritized for indexing. |
| | Duplicate Content | Links to duplicate pages confuse search engines, causing indexing issues. |
| | Thin Content Pages | Pages with minimal content may be overlooked by search engines. |
| Resource Constraints | Limited Crawl Budget | Restricted crawl budgets delay backlink indexing. |
| | Server Performance Issues | Slow or unreliable servers impede crawlers’ ability to access pages. |
| | Bandwidth Restrictions | Limited bandwidth reduces the number of pages crawled. |
| Link Implementation | Deep Page Placement | Links buried deep within the site hierarchy may be less accessible. |
| | Incorrect Attribute Usage | Misuse of ‘nofollow’ or similar attributes blocks crawlers from following links. |
| | Redirect Chains | Excessive or improper redirects confuse crawlers, causing indexing issues. |

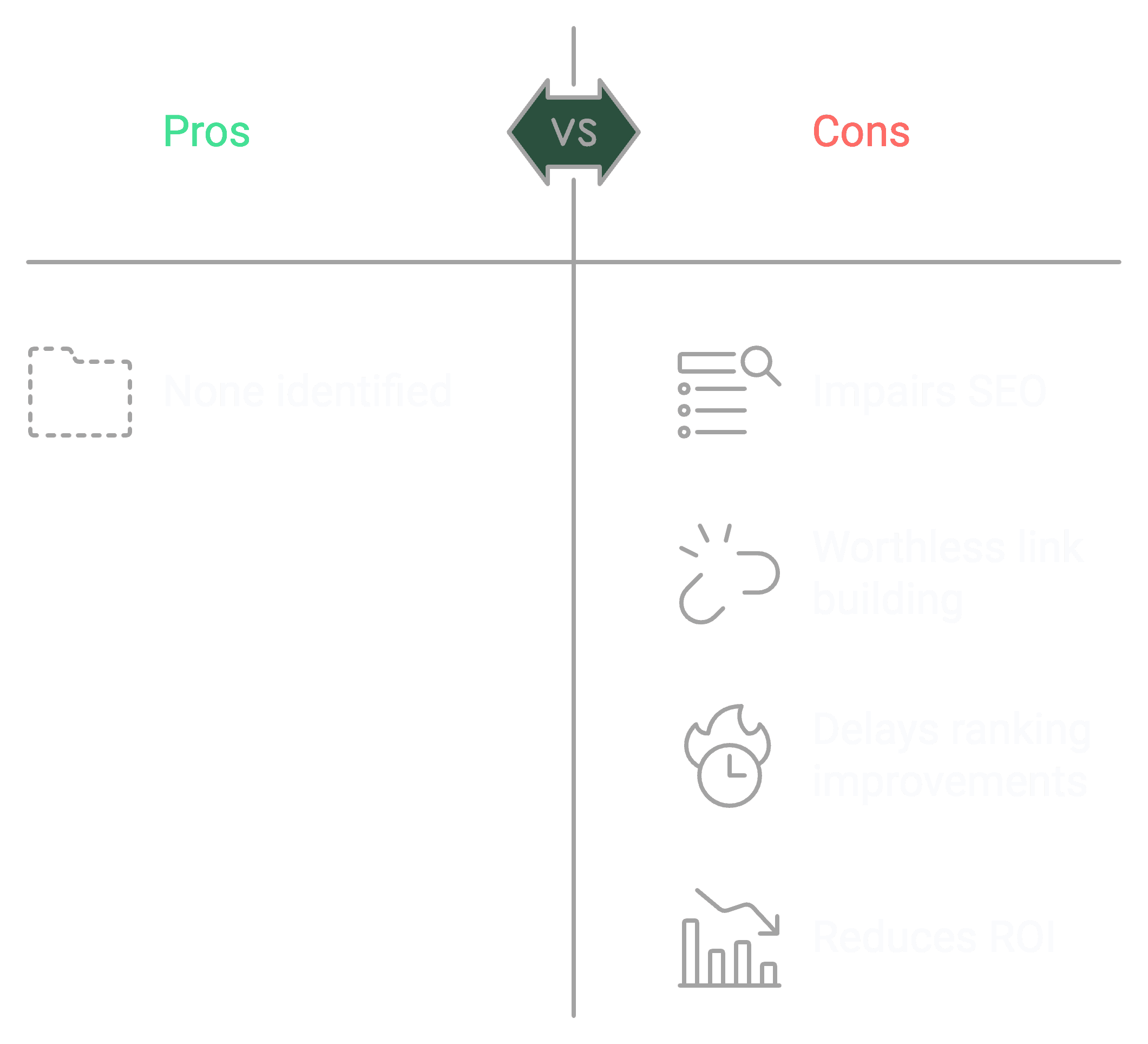

How do manual and automated backlink indexing solutions compare?

Manual and automated backlink indexing solutions represent two distinct approaches to getting backlinks recognized by search engines, with significant differences in efficiency and results.

Manual methods rely on time-intensive human processes that often lead to inconsistent outcomes, while automated solutions like the Backlink Indexing Tool utilize advanced technology to systematically accelerate indexing rates and provide reliable performance metrics.

What limitations will you face with manual indexing methods?

Manual indexing methods demand extensive human intervention, leading to frequent errors and inconsistent outcomes across different backlink submissions.

Key manual indexing limitations are:

- The average indexing success rate is between 30% and 40%, indicating a significant portion of links may remain unindexed.

- Processing time for each batch is typically 4-6 weeks, reflecting the extended duration needed for full indexing.

- Human error rate ranges from 15% to 25%, impacting the efficiency and accuracy of indexing efforts.

- The system can handle a daily submission capacity of 50-100 links, setting a limit on daily throughput.

- Tracking accuracy is estimated between 40% and 60%, highlighting potential gaps in monitoring indexed links.

- The cost per indexed link falls between $0.50 and $1.00, factoring into the budget for link-building campaigns.

- Resource requirements demand 2-4 hours of daily attention to maintain indexing processes effectively.

How can automated indexing tools solve your indexing challenges?

Automated indexing tools resolve backlink indexing challenges by implementing systematic processing algorithms that optimize submission timing and monitoring.

The technology handles thousands of links simultaneously while maintaining consistent quality standards and providing comprehensive analytics.

Performance metrics highlight the substantial improvements of automated tools over manual methods:

| Metric | Manual methods | Automated tools |
|---|---|---|
| Success rate | 30-40% | 85-95% (approximately) |
| Processing time | 4-6 weeks | 14 days |
| Daily capacity | 50-100 links | 5000+ links |
| Error rate | 15-25% | <2% |
| Cost efficiency | $0.50-$1.00/link | $0.25-$0.30/link |

Why are professional indexing tools more effective?

Professional indexing tools can achieve consistent 85-95% success rates by implementing intelligent submission patterns, automated quality control, and strict adherence to search engine guidelines.

The tools process thousands of links daily while maintaining high-quality standards and preventing potential search engine penalties.

Core advantages include the following:

- Intelligent submission algorithms optimize the timing and order of submissions to improve indexing rates.

- Automated quality control filters out low-quality links, ensuring only valuable links are submitted for indexing.

- Real-time status monitoring provides instant updates on the indexing status of submitted links.

- API integration capabilities allow seamless connections with other SEO tools and platforms.

- Detailed performance analytics offer insights into indexing success rates and submission efficiency.

- Automated retry systems resubmit unindexed links, increasing the chances of successful indexing.

- Penalty prevention measures ensure compliance with search engine guidelines, minimizing the risk of penalties.

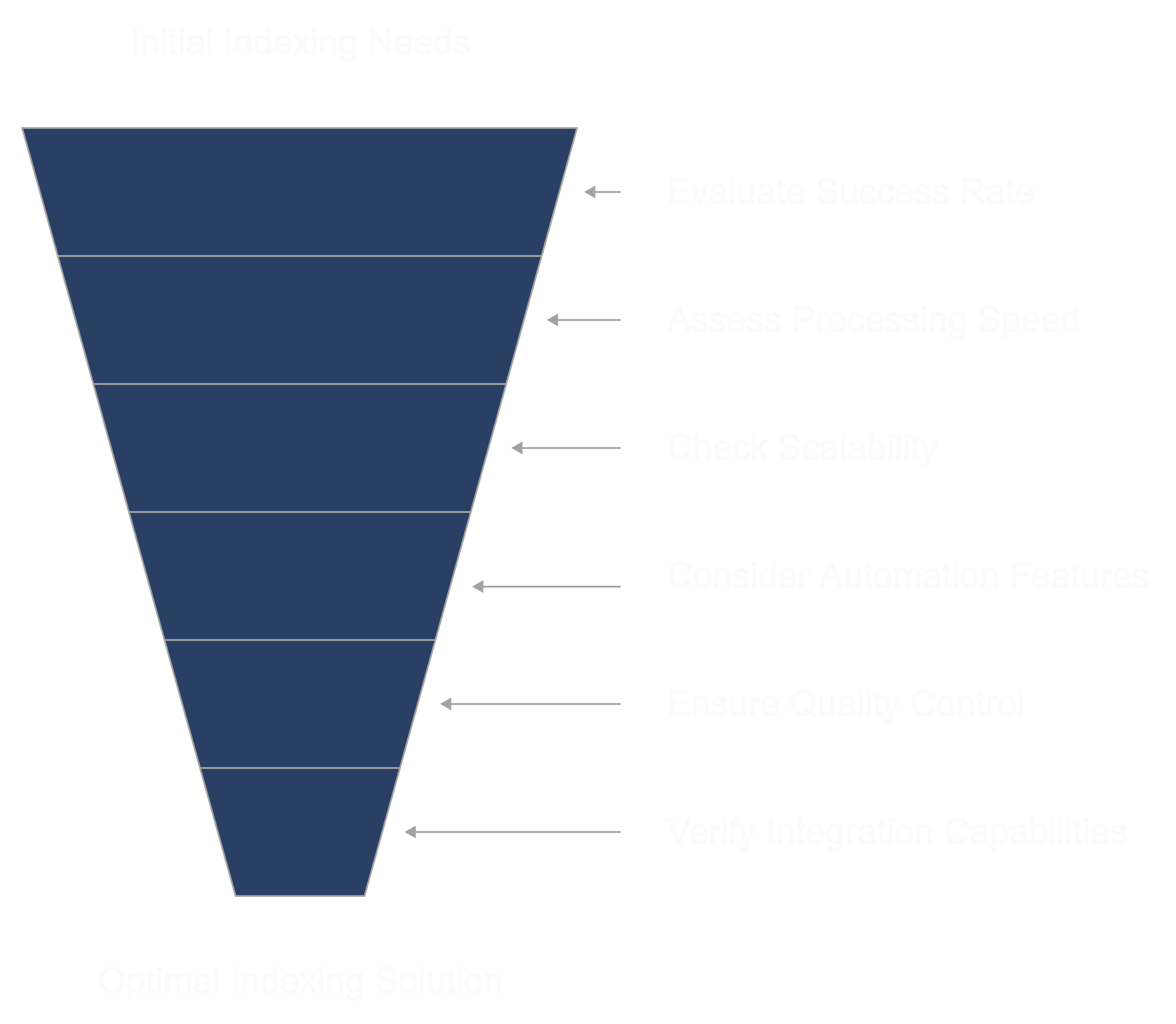

How should you select the right indexing solution?

The right tool can help maximize your link-building efforts by improving indexing success rates, accelerating processing times, and ensuring compliance with search engine guidelines.

Here are some critical elements to look for when selecting an indexing solution:

- Success rate: Look for indexing solutions with a proven track record of high success rates, ideally in the range of 85-95%, to ensure your links are effectively indexed.

- Processing speed: Evaluate how quickly the tool processes links. Faster processing times, ideally within a few days, can improve your link-building ROI by speeding up visibility.

- Scalability: If you need to index a large number of links regularly, choose a solution that can handle high volumes, allowing for thousands of links daily if necessary.

- Automation features: Advanced features like automated submission, retry systems for unindexed links, and real-time status monitoring can streamline the indexing process and save you time.

- Quality control: Opt for a solution that includes automated quality checks to prevent low-quality or irrelevant links from being submitted, as these can harm SEO.

- Integration capabilities: If you use other SEO tools, look for indexing solutions that offer API integration for seamless compatibility and easy workflow integration.

- Cost efficiency: Balance the price per indexed link against the quality of features, success rate, and volume needs. Some tools offer bulk discounts, which may be cost-effective if you have a high link volume.

- Penalty prevention: Select a solution that adheres to search engine guidelines to avoid penalties, ensuring safe indexing without risking search engine violations.

- Support and analytics: Look for tools that offer detailed performance analytics and reliable customer support, which can provide insights into your indexing progress and troubleshoot issues quickly.

How does technical SEO impact your indexing performance?

Technical SEO directly influences search engine indexing performance by establishing core infrastructure elements that determine how effectively search engines can crawl, process, and store website content. Technical SEO implementation leads to 35-45% faster indexing rates and 60% better crawl efficiency.

Sites with optimized technical foundations consistently achieve higher indexing coverage and maintain better search visibility compared to those with poor technical optimization.

What role does your site architecture play in indexing?

Site architecture determines how efficiently search engines discover and process your website’s content through its structural organization and internal linking patterns.

A well-planned architecture enables search engines to find and index pages up to approximately 40% faster by providing clear crawl paths and logical content hierarchies.

Essential site architecture components include:

- Maximum URL depth of 3 levels ensures key content is quickly accessible to search engines.

- Category-based content organization groups related content, improving relevance and crawl efficiency.

- Strategic internal link distribution guides crawlers to important pages, enhancing indexing potential.

- Clear navigation hierarchy provides an intuitive structure for users and search engines alike.

- Clean URL parameter structure prevents duplicate content issues and streamlines indexing.

- XML sitemap integration helps search engines discover and prioritize pages more effectively.
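As a quick way to audit the three-level depth guideline, the sketch below counts path segments in each URL and flags anything deeper than the limit. It assumes "depth" means the number of path segments; measuring click depth from the homepage would instead require the site's internal link graph. The example URLs are hypothetical.

```python
from urllib.parse import urlparse

MAX_DEPTH = 3  # guideline from the list above


def url_depth(url):
    """Count the non-empty path segments in a URL."""
    path = urlparse(url).path
    return len([segment for segment in path.split("/") if segment])


def too_deep(urls, max_depth=MAX_DEPTH):
    """Return the URLs whose path depth exceeds max_depth."""
    return [url for url in urls if url_depth(url) > max_depth]


# Hypothetical URLs used only for illustration.
urls = [
    "https://example.com/",
    "https://example.com/blog/seo/indexing",
    "https://example.com/shop/a/b/c?ref=nav",
]
print(too_deep(urls))
```

Query strings are ignored on purpose, since parameters do not change how deep a page sits in the hierarchy.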

Why is page loading speed important for indexing?

Page loading speed significantly influences how search engines crawl and index websites. Faster-loading pages are often crawled more frequently and indexed more efficiently, leading to improved search engine visibility.

Key speed optimization factors that influence indexing include:

- Server response time: Aim for a server response time under 200 milliseconds to ensure prompt delivery of page content.

- Time to first byte (TTFB): Maintain a TTFB below 500 milliseconds to reduce latency and improve user experience.

- Image size optimization: Compress and resize images to decrease load times and conserve bandwidth.

- Resource minification: Minify CSS, JavaScript, and HTML files to reduce file sizes and accelerate page rendering.

- Browser cache configuration: Leverage browser caching to store static resources locally, reducing the need for repeated downloads.

- Content compression: Implement Gzip or Brotli compression to shrink file sizes and expedite data transfer.

- Content Delivery Network (CDN) implementation: Utilize a CDN to distribute content across multiple servers, ensuring faster access for users worldwide.

Which HTTP status codes will affect your indexing?

HTTP status codes directly control how search engines handle your pages during the indexing process by signaling content accessibility and required crawler actions.

Proper status code implementation can improve crawl efficiency drastically. Critical status codes and their indexing impact include:

| Status code | Impact on indexing | Recommended action |
|---|---|---|
| 200 | Pages are indexed normally | Monitor for consistency |
| 301 | Transfers 90-99% of link equity | Use for permanent redirects |
| 302 | Passes limited link equity | Avoid for permanent changes |
| 404 | Removes pages from index | Fix or redirect broken URLs |
| 410 | Permanent content removal | Use for intentionally removed content |
| 500 | Blocks indexing completely | Fix server errors immediately |
| 503 | Temporary indexing pause | Use during maintenance only |
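The table above can be turned into a small audit helper that groups crawled URLs by their expected indexing outcome. This is a sketch under stated assumptions: the action labels paraphrase the table, the fallback by status class for unlisted codes is our own simplification, and the `(url, status)` input format is hypothetical.

```python
# Expected outcome per status code, paraphrasing the table above.
STATUS_ACTIONS = {
    200: "index normally",
    301: "follow permanent redirect",
    302: "follow temporary redirect",
    404: "remove from index",
    410: "remove permanently",
    500: "fix server error",
    503: "retry after maintenance",
}


def indexing_action(status):
    """Return the expected indexing outcome for an HTTP status code."""
    if status in STATUS_ACTIONS:
        return STATUS_ACTIONS[status]
    # Assumption: unlisted codes are handled like others in their class.
    if 200 <= status < 300:
        return "index normally"
    if 300 <= status < 400:
        return "follow redirect"
    if 400 <= status < 500:
        return "remove from index"
    if 500 <= status < 600:
        return "fix server error"
    return "unknown"


def audit(crawl_log):
    """Group URLs from (url, status) pairs by expected outcome."""
    report = {}
    for url, status in crawl_log:
        report.setdefault(indexing_action(status), []).append(url)
    return report


print(audit([("/a", 200), ("/b", 404), ("/c", 200)]))
```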

How do your robots.txt directives impact indexing?

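Robots.txt directives guide search engine crawlers by defining which content they may crawl. Because a blocked URL can still be indexed when other pages link to it, use a noindex directive rather than robots.txt when the goal is to keep a page out of the index.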

Proper robots.txt configuration can improve crawl efficiency while preventing the waste of crawl budget on non-essential pages. Essential robots.txt considerations include:

- Directory-specific crawl rules allow control over which folders or sections of the site are accessible to crawlers.

- Crawl rate specifications (such as the Crawl-delay directive, which some crawlers honor but Googlebot ignores) manage the frequency of crawler requests, reducing server load when necessary.

- Sitemap location declarations help search engines find all important pages quickly by pointing them to the sitemap.

- User agent targeting rules define custom directives for different search engine crawlers, optimizing content visibility per crawler.

- Parameter handling rules use URL patterns to keep crawlers away from duplicate or non-canonical URLs created by unnecessary URL parameters.
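To check how a set of directives will be interpreted before deploying them, you can parse a robots.txt file with Python's standard-library `urllib.robotparser`. The rules and the `example.com` URLs below are hypothetical, and individual search engines may interpret edge cases differently from this parser, so treat the output as a first sanity check.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt covering directory rules, a crawl delay,
# user-agent targeting, and a sitemap declaration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/
Crawl-delay: 5

User-agent: Googlebot
Disallow: /admin/

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Directory-specific rules for the default group.
print(parser.can_fetch("*", "https://example.com/admin/login"))
print(parser.can_fetch("*", "https://example.com/blog/"))

# User-agent targeting: the Googlebot group only blocks /admin/.
print(parser.can_fetch("Googlebot", "https://example.com/cart/"))

# Crawl delay for the default group, and declared sitemaps (Python 3.8+).
print(parser.crawl_delay("*"))
print(parser.site_maps())
```

A crawler that matches a specific group, such as Googlebot here, follows only that group's rules, which is why `/cart/` is allowed for it even though the default group blocks it.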

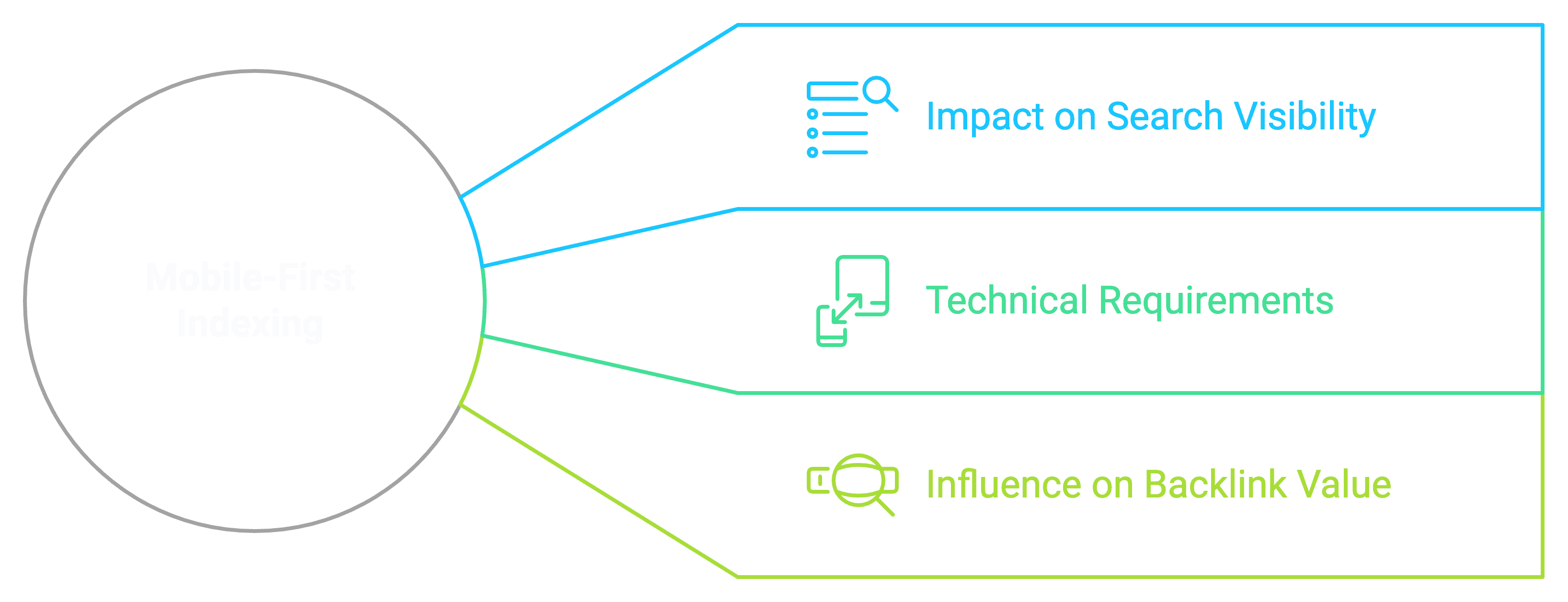

Why is mobile-first indexing important for your site?

Mobile-first indexing is essential as it prioritizes your site’s mobile version for ranking, impacting search visibility, crawl efficiency, and user experience. With mobile optimization, sites often see higher conversion rates, reduced bounce rates, and improved engagement metrics, all of which enhance search performance and audience reach.

How has mobile-first changed the way search engines index?

Mobile-first indexing has transformed search engine behavior by making the mobile version of websites the primary source for content evaluation and ranking decisions.

Search engines now analyze mobile-specific metrics like touch target sizes, viewport configurations, and responsive design elements to determine indexing priority.

Some mobile indexing performance metrics include:

- Page load speed enhances crawl efficiency and indexing performance.

- Mobile responsiveness supports higher crawl rates with mobile-first indexing.

- Content parity between desktop and mobile versions helps maintain consistent rankings.

- Core Web Vitals improve user experience, positively impacting search visibility.

What requirements must you meet for mobile-first indexing?

For mobile-first indexing, meeting specific technical standards ensures that your site is equally accessible, fast, and user-friendly on mobile devices as it is on desktops.

This alignment helps search engines effectively crawl, index, and rank mobile content, optimizing visibility and user experience. The essential mobile-first requirements are:

- Content parity across devices guarantees that all essential content appears on both desktop and mobile.

- Mobile-optimized images under 2MB improve loading speeds and user experience on smaller screens.

- Responsive design implementation adapts content layout smoothly across various screen sizes.

- Touch-friendly navigation with clickable targets of at least 44x44px ensures usability on touchscreens.

- Sub-3-second loading times enhance mobile engagement and reduce bounce rates.

- Properly configured viewport settings provide an optimized view across all mobile devices.

- Mobile-friendly structured data ensures accurate content interpretation by search engines on mobile.

- Identical meta tags on all versions maintain SEO consistency and prevent ranking discrepancies.

How does mobile-first impact your backlink value?

Mobile-first indexing influences backlink value by evaluating link accessibility and functionality on mobile devices as primary ranking factors.

Links must be easily tappable, visible without horizontal scrolling, and surrounded by relevant mobile-optimized content to maintain their full SEO value.

Some mobile backlink optimization guidelines are:

- Maintain a minimum 16px font size for link text to improve readability on mobile screens.

- Ensure touch targets are at least 44x44px to make links easily tappable on smaller devices.

- Keep link spacing at least 8px apart to prevent accidental clicks and improve navigation.

- Implement consistent anchor text across versions for clarity and SEO alignment.

- Position links within viewable content areas to ensure visibility without horizontal scrolling.

- Verify mobile rendering of linked pages to confirm links function as expected on mobile.

- Monitor mobile accessibility scores to identify and fix issues affecting link usability.

Why are XML sitemaps essential for your indexing success?

XML sitemaps are essential tools that increase indexing success rates by providing search engines with structured roadmaps of website content. These machine-readable files act as direct communication channels with search engines, helping them understand site architecture and prioritize content for indexing.

Through automated sitemap submission via API integration, we’ve observed significantly faster crawling and indexing of new and updated content across thousands of client websites.

What should you include in an effective sitemap?

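An effective sitemap must include all important URLs along with three key metadata elements: last modification date, change frequency, and priority level. Search engines treat these values as hints, and Google has stated that it ignores change frequency and priority, so an accurate last modification date matters most.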

Priority and update frequency guidelines help search engines focus on important pages, with frequent updates to key areas like the homepage and less frequent checks on archive content.

The following essential pages require inclusion:

- Homepage and primary landing pages

- Product and category pages

- Blog posts and articles

- Backlink target pages

- Important media assets (images, videos)
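A sitemap with these elements can be generated with Python's standard library. The sketch below is a minimal illustration: the page list, dates, and `example.com` URLs are hypothetical, and a real generator would pull entries from your CMS or database.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Serialize a list of page dicts into sitemap XML (UTF-8 bytes)."""
    urlset = ET.Element("urlset", {"xmlns": SITEMAP_NS})
    for entry in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = entry["loc"]
        # lastmod uses the W3C date format, e.g. 2024-05-01.
        ET.SubElement(url, "lastmod").text = entry["lastmod"].isoformat()
        if "changefreq" in entry:
            ET.SubElement(url, "changefreq").text = entry["changefreq"]
        if "priority" in entry:
            ET.SubElement(url, "priority").text = f"{entry['priority']:.1f}"
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Hypothetical pages used only for illustration.
pages = [
    {"loc": "https://example.com/", "lastmod": date(2024, 5, 1),
     "changefreq": "daily", "priority": 1.0},
    {"loc": "https://example.com/blog/indexing-guide", "lastmod": date(2024, 4, 12),
     "changefreq": "monthly", "priority": 0.6},
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml.decode("utf-8"))
```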

When and how should you update your sitemaps?

Sitemap updates should occur automatically whenever significant content changes happen on your website.

Automated sitemap generation delivers approximately 45% faster indexing compared to manual updates.

For optimal results, implement an automated sitemap system that:

- Monitors content changes continuously to ensure all updates are captured in the sitemap.

- Creates new sitemap entries within minutes for quick reflection of new or updated content.

- Compresses sitemaps over 10MB to reduce file size and enhance loading efficiency.

- Submits updates via API to search engines, ensuring changes are promptly recognized.

- Validates sitemap formatting to meet search engine requirements and avoid indexing errors.

- Tracks processing status to confirm that search engines successfully receive and process updates.

- Generates performance reports to provide insights into indexing efficiency and sitemap effectiveness.

What sitemap implementation mistakes should you avoid?

Common sitemap mistakes that harm indexing performance include non-canonical URLs, exceeding size limits, and including blocked content.

Regular sitemap audits using Google Search Console combined with automated validation checks help prevent these issues.

These critical errors significantly impact indexing:

- Including URLs blocked by robots.txt

- Mixing different content types

- Using incorrect date formats

- Failing to compress large sitemaps

- Breaking XML formatting rules

- Including non-200 status code URLs

- Missing last modification dates

- Exceeding 50,000 URL limit per file

- Including noindex pages
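Several of these mistakes can be caught with an automated check before a sitemap is published. The sketch below assumes each candidate entry is a dict with hypothetical `status`, `noindex`, and `blocked_by_robots` fields that your own crawler or CMS would populate. The date pattern is a simplified version of the W3C date format and does not cover every valid variant, such as fractional seconds.

```python
import re

MAX_URLS_PER_FILE = 50_000  # per-file URL limit from the list above

# Simplified W3C date check: YYYY-MM-DD, optionally with time and offset.
LASTMOD_PATTERN = re.compile(
    r"^\d{4}-\d{2}-\d{2}(T\d{2}:\d{2}(:\d{2})?(Z|[+-]\d{2}:\d{2}))?$"
)


def audit_entry(entry):
    """Return a list of problems found in one candidate sitemap entry."""
    problems = []
    lastmod = entry.get("lastmod")
    if not lastmod:
        problems.append("missing lastmod")
    elif not LASTMOD_PATTERN.match(lastmod):
        problems.append("invalid date format")
    if entry.get("status", 200) != 200:
        problems.append("non-200 status")
    if entry.get("noindex"):
        problems.append("noindex page")
    if entry.get("blocked_by_robots"):
        problems.append("blocked by robots.txt")
    return problems


def split_into_files(urls, limit=MAX_URLS_PER_FILE):
    """Split a URL list into chunks that respect the per-file limit."""
    return [urls[i:i + limit] for i in range(0, len(urls), limit)]


print(audit_entry({"loc": "/b", "lastmod": "05/01/2024", "status": 404, "noindex": True}))
print([len(chunk) for chunk in split_into_files(list(range(120_000)))])
```

Each chunk returned by `split_into_files` would become its own sitemap file, with the files listed together in a sitemap index.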

How can you monitor and optimize your indexing performance?

Monitoring and optimizing indexing performance requires a systematic approach combining data analytics, diagnostic tools, and proven optimization methods. Through Backlink Indexing Tool’s extensive experience managing millions of backlinks, we’ve identified the most effective monitoring and optimization techniques.

Which tools and metrics should you use to track indexing?

The most effective tools for tracking indexing performance include Google Search Console, specialized log file analyzers, and professional indexing platforms like Backlink Indexing Tool.

These tools provide comprehensive data about your indexing status and performance.

Here are the essential metrics you should monitor:

| Metric category | Key metrics | Importance |
|---|---|---|
| Coverage metrics | Indexed vs. submitted pages; indexing errors; excluded pages; valid pages ratio | Critical for understanding overall indexing health |
| Crawl data | Daily crawl rate; pages crawled per day; crawl budget usage; server response times | Essential for optimizing crawl efficiency |
| Backlink metrics | Success rate; average indexing time; failed attempts; authority scores | Crucial for backlink performance |
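Most of these metrics are simple ratios and averages, so they are easy to compute from exported data. The sketch below assumes a hypothetical input format: page counts for coverage, and a list of days-to-index per backlink where `None` marks a link that was never indexed.

```python
def coverage_ratio(indexed, submitted):
    """Share of submitted pages that ended up indexed (0.0 to 1.0)."""
    if submitted == 0:
        return 0.0
    return indexed / submitted


def backlink_metrics(days_to_index):
    """Summarize backlink indexing results.

    days_to_index holds one value per backlink: the number of days
    until it was indexed, or None if it was never indexed.
    """
    indexed = [days for days in days_to_index if days is not None]
    total = len(days_to_index)
    return {
        "success_rate": len(indexed) / total if total else 0.0,
        "average_indexing_days": sum(indexed) / len(indexed) if indexed else None,
        "failed_attempts": total - len(indexed),
    }


# Hypothetical figures used only for illustration.
print(coverage_ratio(850, 1000))
print(backlink_metrics([4, 6, None, 8]))
```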

How can you identify and fix common indexing issues?

Common indexing issues can be identified through systematic analysis of server logs, Search Console data, and specialized indexing tool reports.

Indexing problems stem from technical issues and content quality. Here are the primary issues and their solutions:

| Issue type | Specific problem | Solution |
|---|---|---|
| Technical problems | Server errors | Fix by optimizing response times |
| | Robots.txt mistakes | Adjust directives |
| | Duplicate content | Implement canonical tags |
| | Broken links | Redirect or update URLs |
| Content issues | Low quality pages | Improve content depth |
| | Thin content | Add valuable information |
| | Duplicate meta data | Create unique descriptions |
| | Poor site structure | Enhance internal linking |
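
Server log analysis, mentioned above as a way to find these issues, can start small. The sketch below assumes logs in the common "combined" access log format and identifies the crawler by user-agent substring only; both are assumptions, and user-agent matching is a heuristic because the string can be spoofed. It counts crawler requests per day and lists the paths that returned errors.

```python
import re
from collections import Counter

# Matches the common/combined access log format; adjust for your server.
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[(?P<day>[^:]+):[^\]]+\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+'
    r'(?: "[^"]*" "(?P<agent>[^"]*)")?'
)


def crawl_report(lines, bot_token="Googlebot"):
    """Count bot requests per day and collect paths that returned errors."""
    per_day = Counter()
    errors = Counter()
    for line in lines:
        m = LOG_LINE.match(line)
        # Skip unparseable lines and requests from other user agents.
        if not m or bot_token not in (m.group("agent") or ""):
            continue
        per_day[m.group("day")] += 1
        if int(m.group("status")) >= 400:
            errors[(m.group("path"), m.group("status"))] += 1
    return per_day, errors
```

Paths that repeatedly return 4xx or 5xx codes to the crawler are natural candidates for the redirect and server fixes in the table.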

How can you improve and speed up your indexing process?

To maximize indexing efficiency and ensure search engines quickly find and process your content, it’s essential to follow proven best practices and optimization techniques.

These strategies help create an indexing-friendly setup while also accelerating the process, so your content can reach audiences faster and stay competitive in search results. Here is how you can optimize your indexing process:

| Category | Best practices |
|---|---|
| Technical optimization | Use clean URL structures |
| | Implement HTTPS |
| | Optimize page speed and reduce server response times |
| | Create and submit XML sitemaps |
| | Enable browser caching |
| Content strategy | Update content regularly |
| | Build quality internal links |
| | Write unique meta data |
| | Ensure mobile compatibility |
| Crawl management | Monitor crawl budget and prioritize key pages |
| | Remove low-value URLs |
| | Configure robots.txt properly |
| Distribution methods | Utilize social platforms to share content |
| | Implement RSS feeds to encourage regular crawls |
| | Submit URLs directly to search engines |
| | Build quality backlinks |
| Tool implementation | Use Backlink Indexing Tool’s API for automation |
| | Enable ping services to notify search engines of updates |
| | Monitor indexing status and track performance metrics |
| Data analysis | Measure indexing speed and success rates |
| | Analyze crawl patterns and response times |
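
One way to check the "configure robots.txt properly" item is to test your most important URLs against the file before deploying it. The sketch below uses Python's standard `urllib.robotparser`; the function name and the sample rules are illustrative assumptions. Note that this parser follows the basic robots.txt rules and does not replicate every matching extension a given search engine supports, so treat the result as a first check only.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


if __name__ == "__main__":
    rules = "User-agent: *\nDisallow: /private/\n"
    key_pages = [
        "https://example.com/private/report",
        "https://example.com/blog/post",
    ]
    # Any key page listed here would be blocked from crawling.
    print(blocked_urls(rules, key_pages))
```

Running a check like this over the URLs in your sitemap helps catch directives that accidentally block pages you want indexed.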

What are the latest trends shaping search engine indexing?

Search engine indexing is experiencing rapid advancement through AI integration, machine learning implementation, and enhanced processing capabilities. Modern indexing systems now utilize sophisticated algorithms to analyze content context, understand user intent, and process diverse media formats with unprecedented accuracy.

These technological improvements enable search engines to deliver more relevant results while processing information faster than ever before.

How is AI transforming the way search engines index content?

AI transforms search engine indexing by enabling deeper content understanding and automated classification systems.

Machine learning models now analyze contextual relationships, semantic meaning, and user behavior patterns to determine content relevance and indexing priority.

Key AI-driven indexing capabilities include the following:

- Natural Language Processing (NLP) enhances content context understanding, allowing search engines to interpret complex language structures more effectively.

- Visual recognition accelerates image classification and tagging, enabling quicker indexing of visual elements.

- Voice processing improvements boost speech-to-text accuracy, making audio content more accessible for indexing.

- Semantic analysis enhances relationship mapping between content elements, supporting more accurate content retrieval.

- Real-time processing reduces indexing delays, allowing search engines to update their databases more promptly.

Which new indexing features should you be aware of?

The most significant new indexing features focus on granular content processing and multilingual capabilities. Google’s passage indexing, which Google has since described as a ranking change rather than separate indexing of passages, lets the search engine evaluate specific sections of a page on their own, while MUM (Multitask Unified Model) is trained across 75 languages.

Recent technical advances are:

- Passage-based indexing allows search engines to index specific sections of content, improving the targeting of relevant information.

- Cross-language processing enables content to be understood and indexed across multiple languages, expanding global reach.

- Real-time indexing processes content almost immediately, ensuring that updates appear quickly in search results.

- Mobile-first evaluation prioritizes mobile-friendly content, aligning with modern user behaviors for improved accessibility.

- Video moment analysis indexes specific video segments, making key moments discoverable in search results.

How are search engine indexing algorithms evolving?

Search engine indexing algorithms are advancing rapidly with machine learning and behavioral analysis capabilities.

They now handle over 3.5 billion daily queries, continually adapting to new content types and user preferences. Leveraging over 200 ranking signals, these algorithms can assess content quality almost instantly upon discovery.

Recent enhancements include improved spam detection accuracy, faster duplicate content identification, more precise content quality assessments, and significantly reduced processing times.

With updates occurring every 15 minutes, these technological advancements allow search engines to maintain fresh, relevant indexes while filtering low-quality content efficiently.

For website owners, this means quicker content discovery and more accurate search rankings.

How should you build your indexing strategy?

An effective indexing strategy requires careful planning and systematic implementation to maximize backlink indexing success rates. By combining automated tools with strategic prioritization, you can achieve optimal indexing performance while efficiently managing resources. Our data shows that well-structured indexing strategies typically result in 85-95% successful indexation rates within 14 days.

What should your comprehensive indexing plan include?

A comprehensive indexing plan includes six essential components that work together to ensure consistent and reliable backlink indexing performance.

These components form the foundation of successful indexing operations and enable systematic tracking of results.

Key components for indexing success are listed below:

- Monitoring system tracks the indexing status of content, ensuring visibility across search engines.

- Submission schedule maintains a consistent flow of content, improving chances of timely indexing.

- Quality assessment filters out low-value links to prioritize high-quality content for indexing.

- Performance tracking measures success metrics, providing insights into indexing effectiveness.

- Automation tools help scale operations, allowing for efficient handling of high volumes of content.

- Contingency planning prepares for failed indexing attempts, ensuring alternative strategies are in place.

How should you prioritize your content for indexing?

To maximize indexing efficiency, prioritize content with the highest SEO impact, focusing on backlinks that deliver substantial value.

An effective prioritization framework allocates resources across three tiers. High-priority content, which receives the most resources, includes backlinks from referring domains with a high Domain Rating (DR 50+), contextually relevant links, and fresh content less than a week old.

Medium-priority content, allocated a smaller resource share, includes DR 30-49 domains, category and product pages, and content up to a month old.

Low-priority content, allocated minimal resources, consists of lower DR domains, non-strategic pages, and older content.
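
The three tiers above can be expressed as a small classification function. This is a sketch under stated assumptions: the text does not say how the criteria combine, so the code treats them as alternatives (meeting any one criterion qualifies a link for the tier), it ignores page type, and the dictionary keys `dr`, `age_days`, and `relevant` are hypothetical.

```python
def priority_tier(domain_rating, age_days, contextually_relevant=False):
    """Map a backlink to "high", "medium", or "low" using the thresholds above."""
    # Assumption: any single high-priority criterion is enough.
    if domain_rating >= 50 or contextually_relevant or age_days < 7:
        return "high"
    # Medium: DR 30-49 or content up to a month old.
    if domain_rating >= 30 or age_days <= 30:
        return "medium"
    return "low"


def order_queue(links):
    """Sort links so higher-priority tiers are submitted first (stable sort)."""
    rank = {"high": 0, "medium": 1, "low": 2}
    return sorted(
        links,
        key=lambda link: rank[
            priority_tier(link["dr"], link["age_days"], link.get("relevant", False))
        ],
    )
```

If your own data suggests the criteria should be combined differently, for example requiring both a high DR and fresh content, only the conditions in `priority_tier` need to change.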

How can you best integrate automated tools into your strategy?

Automated tool integration requires proper setup of API connections and workflow automation to achieve maximum efficiency in your indexing operations.

Our Backlink Indexing Tool API enables streamlined, highly automated processing while maintaining high success rates.

Technical implementation requirements:

| Component category | Specific elements |
|---|---|
| API integration components | Authentication setup |
| | Endpoint configuration |
| | Rate limit management |
| | Error handling protocols |
| | Response processing |
| Workflow automation elements | Submission queuing |
| | Status monitoring |
| | Credit management |
| | Report generation |
| | Notification system |
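
Rate limit management and error handling from the table above often come down to retrying with a growing delay. The sketch below is generic and does not describe the Backlink Indexing Tool API: `submit` stands in for whatever function your client uses to send one URL, and `RateLimitError` is a hypothetical exception that such a client might raise when the API reports a rate limit.

```python
import time


class RateLimitError(Exception):
    """Hypothetical error raised by a client when the API reports a rate limit."""


def submit_with_retry(submit, urls, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Submit each URL, backing off exponentially when rate limited.

    `submit` is a caller-supplied function that sends one URL and returns
    the API response. URLs that still fail after max_attempts map to None.
    """
    results = {}
    for url in urls:
        for attempt in range(max_attempts):
            try:
                results[url] = submit(url)
                break
            except RateLimitError:
                if attempt == max_attempts - 1:
                    results[url] = None  # give up; leave for contingency handling
                else:
                    # Wait 1x, 2x, 4x ... the base delay before retrying.
                    sleep(base_delay * 2 ** attempt)
    return results
```

URLs that end up mapped to `None` can be fed back into the submission queue later or flagged in reports, which ties the retry logic to the status monitoring and notification elements listed above.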
