Technical SEO for indexing is a critical component of search engine optimization that focuses on enhancing website visibility and accessibility to search engines.

This article explores key elements of technical SEO for indexing, including URL structure, HTTP status codes, robots.txt files, XML sitemaps, and schema markup.

We’ll cover best practices, common pitfalls, and emerging trends in technical SEO for indexing, offering actionable insights for SEO professionals and website owners to improve search engine rankings and overall online presence.

What is technical SEO for indexing?

Technical SEO for indexing is the practice of optimizing a website’s technical aspects to enhance its crawlability and indexability by search engines.

It involves implementing specific strategies to ensure search engine bots can easily discover, access, and understand a website’s content, ultimately leading to improved visibility in search results.

This process focuses on the backend elements of a website, addressing issues that prevent search engines from effectively crawling and indexing pages.

By optimizing these technical aspects, websites can improve their chances of appearing in relevant search queries and achieving higher rankings.

How does technical SEO differ from other SEO practices?

Technical SEO differs from other SEO practices by focusing on the website’s infrastructure and backend elements rather than content or off-page factors.

Content SEO focuses on creating relevant content, and off-page SEO handles backlinks.

Technical SEO covers the technical parts that help search engines crawl and index websites.

Key differences between technical SEO and other SEO practices:

| Aspect | Technical SEO | Content SEO | Off-Page SEO |
|---|---|---|---|
| Focus | Backend optimization | Content quality and relevance | External factors |
| Skills required | Technical knowledge | Content creation | Relationship building |
| Implementation | Coding and server-side changes | Writing and editing | Link building and outreach |
| Measurability | Crawl stats and indexation reports | Engagement metrics | Backlink profiles |
| Primary goal | Improve crawlability and indexability | Enhance user experience and relevance | Build authority and trust |

Technical SEO often requires collaboration between SEO professionals and web developers to implement necessary changes and optimizations.

This interdisciplinary approach ensures that a website’s technical foundation supports its overall SEO strategy.

Why is indexing crucial for search engine visibility?

Indexing is crucial for search engine visibility because it directly impacts a website’s ability to appear in search results.

Without proper indexing, even the most well-crafted content will remain invisible to search engines and, consequently, to potential visitors.

Benefits of effective indexing include:

- Improved search rankings: Properly indexed pages have a better chance of ranking for relevant queries.

- Faster content discovery: Search engines can find and crawl new or updated content more quickly.

- Increased organic traffic: Higher visibility in search results leads to more potential visitors.

- Better user experience: Accurate indexing ensures users find the most relevant and up-to-date content.

- Enhanced site structure understanding: Search engines can better comprehend the hierarchy and relationships between pages.

To illustrate the importance of indexing, consider that Google is commonly estimated to process over 3.5 billion searches per day.

If a website’s pages aren’t indexed, they won’t appear in any of these searches, regardless of their relevance or quality.

What are the key components of technical SEO for indexing?

The key components of technical SEO work together to create an optimal environment for search engines to discover, understand, and index web pages effectively.

Key components of technical SEO for indexing:

- URL structure optimization: Creating clean, descriptive, and user-friendly URLs

- HTTP status code management: Properly handling 3xx redirects, 404 errors, and other status codes

- Robots.txt configuration: Guiding search engine crawlers on which pages to access or avoid

- XML sitemap implementation: Providing a roadmap of important pages for search engines

- Schema markup and structured data: Enhancing search engine understanding of page content

- Page speed optimization: Ensuring fast load times for better crawling and user experience

- Mobile-friendliness: Optimizing for mobile devices to align with mobile-first indexing

- Internal linking structure: Creating a logical hierarchy and facilitating efficient crawling

- Canonical tags: Addressing duplicate content issues and consolidating link equity

- Hreflang tags: Specifying language and regional targeting for international websites

To maximize the effectiveness of technical SEO for indexing, it’s essential to regularly audit and optimize these components.

Tools like Google Search Console, Screaming Frog, and various SEO platforms can help identify and address technical issues that may be hindering proper indexing and visibility in search results.

How does URL structure impact indexing?

A clear and well-organized URL structure plays a pivotal role in ensuring that search engines can crawl and index a website efficiently. Poorly structured URLs may confuse crawlers, leading to incomplete indexing or lower rankings.

What makes a URL structure search engine friendly?

A search engine-friendly URL is simple, descriptive, and uses keywords relevant to the page content.

Here’s how to create optimal URLs:

- Use hyphens instead of underscores to separate words.

- Avoid using dynamic parameters unless necessary.

- Incorporate target keywords in the URL.

- Keep URLs short and easy to read, enhancing both user experience and search engine readability.

For example, a good URL might look like this: www.example.com/seo-tips-for-beginners, while a poorly structured URL could be: www.example.com/?p=456.

How can URL parameters affect indexing?

URL parameters can lead to multiple versions of the same page, which dilutes SEO by causing duplicate content, wasting crawl budget, splitting link equity, and complicating search engine indexing.

Components like session IDs, tracking parameters, sorting, filtering, and pagination can create variations that fragment rankings unless managed properly with canonical tags or URL parameter handling in an SEO tool.

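To mitigate this, add canonical tags that tell search engines which version of a page should be indexed, and link internally only to that canonical version. Google retired the URL Parameters tool in Search Console in 2022, so parameter handling now relies on canonical tags, consistent internal linking, and, where appropriate, robots.txt rules.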
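
The canonicalization step described above can be sketched in Python with the standard library. This is a minimal illustration, not a definitive implementation: the `IGNORED_PARAMS` set and both function names are assumptions made for the example, and the right list of parameters depends on the site.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of parameters that do not change what the page shows.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "ref"}


def canonical_url(url: str) -> str:
    """Drop ignored parameters and sort the rest so variants collapse to one URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    kept.sort()
    # The fragment is dropped because it is not sent to the server.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_link_tag(url: str) -> str:
    """Build the <link rel="canonical"> element for the page's <head>."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


print(canonical_link_tag("https://www.example.com/shoes?utm_source=news&color=red"))
```

Sorting the remaining parameters means that `?size=9&color=red` and `?color=red&size=9` produce the same canonical URL.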

What are the best practices for creating indexable URLs?

Indexable URLs follow a few simple design rules. Best practices for creating indexable URLs:

- Consistency: Ensure your URL structure is consistent across the site.

- Use lowercase: URL paths can be case-sensitive, so using all lowercase letters reduces the risk of duplicate URLs.

- Avoid special characters: Stick to hyphens and avoid underscores, spaces, or other symbols.

- Use HTTPS: Google treats HTTPS as a lightweight ranking signal and tends to prefer the HTTPS version of a page when both versions exist.
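
As a rough illustration, the checks in this list can be expressed as a small Python function. The function name and the wording of each issue are assumptions made for this sketch, and the rules are deliberately simple.

```python
import re
from urllib.parse import urlsplit


def url_issues(url: str) -> list[str]:
    """Return the best-practice rules from the list above that a URL breaks."""
    parts = urlsplit(url)
    issues = []
    if parts.scheme != "https":
        issues.append("not HTTPS")
    if parts.path != parts.path.lower():
        issues.append("uppercase characters in path")
    if "_" in parts.path:
        issues.append("underscore in path")
    # Underscores are reported separately above, so they are allowed here.
    if re.search(r"[^a-z0-9/.\-_]", parts.path.lower()):
        issues.append("special characters in path")
    if parts.query:
        issues.append("query parameters present")
    return issues


print(url_issues("https://www.example.com/seo-tips-for-beginners"))
print(url_issues("http://www.example.com/SEO_Tips?p=456"))
```

An empty list means the URL passes every check in this sketch. It does not guarantee that the page will be indexed.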

What role do HTTP status codes play in indexing?

HTTP status codes communicate the state of a web page to search engines during crawling. The most critical codes to monitor include 200, 301, 404, and 500, each of which informs search engines whether a page is accessible, redirected, missing, or facing server issues.

Which HTTP status codes are most important for SEO?

Status codes tell crawlers how to treat each URL they request. The codes that matter most are:

- 200 OK: Confirms that the page is accessible and eligible for indexing.

- 301 Moved Permanently: Redirects a page’s ranking power to a new URL.

- 404 Not Found: Signals a missing page, which can hurt crawl efficiency and lead to wasted crawl budget.

- 500 Internal Server Error: Indicates a server issue that prevents crawling, potentially causing search engines to de-prioritize a site.

Properly managing these status codes helps search engines understand how to treat each page on the site.
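
To make the mapping concrete, here is a simplified Python sketch that groups status codes by how a crawler is likely to treat them. The labels are assumptions made for illustration; real search engines apply more nuanced rules, for example around repeated server errors.

```python
def crawl_outcome(status: int) -> str:
    """Map an HTTP status code to a simplified, illustrative crawl outcome."""
    if 200 <= status < 300:
        return "index"             # page is accessible and eligible for indexing
    if status in (301, 308):
        return "follow-permanent"  # signals are expected to move to the target URL
    if 300 <= status < 400:
        return "follow-temporary"  # original URL is usually kept in the index
    if 400 <= status < 500:
        return "drop"              # missing or blocked pages fall out over time
    if 500 <= status < 600:
        return "retry"             # server errors are retried and can slow crawling
    return "unknown"


for code in (200, 301, 404, 500):
    print(code, crawl_outcome(code))
```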

How do 3xx redirects affect indexing?

3xx redirects, especially 301 redirects, play a crucial role in transferring ranking signals from one URL to another.

However, incorrect use of redirects can lead to problems such as:

- Chain redirects: Multiple redirects can slow down crawling and hurt user experience.

- Broken redirects: Non-functional redirects can result in dead-end pages, negatively affecting SEO.

Always ensure redirects point to relevant pages to preserve link equity and ensure smooth crawling.
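
Redirect chains and loops can be detected offline from a redirect map, for example one exported from a crawl. The following Python sketch assumes the map is a plain dictionary of source URL to target URL. The function name and outcome labels are hypothetical.

```python
def follow_redirects(start: str, redirects: dict[str, str], max_hops: int = 10) -> tuple[list[str], str]:
    """Walk a redirect map from `start` and classify what was found."""
    path = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        path.append(current)
        if current in seen:
            return path, "loop"
        seen.add(current)
        if len(path) - 1 > max_hops:
            return path, "too many hops"
    hops = len(path) - 1
    if hops == 0:
        return path, "no redirect"
    if hops == 1:
        return path, "single redirect"
    return path, "chain"


redirect_map = {"/old": "/interim", "/interim": "/new", "/a": "/b", "/b": "/a"}
print(follow_redirects("/old", redirect_map))
print(follow_redirects("/a", redirect_map))
```

Any URL reported as a chain is a candidate for pointing its first hop directly at the final destination.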

What should you do about 404 errors for indexing purposes?

While 404 errors are inevitable, managing them effectively is essential. Too many 404s can waste your crawl budget and damage SEO efforts.

Best practices for 404 management:

- Redirect outdated or broken pages to relevant content using a 301 redirect.

- Implement a custom 404 page with a helpful message or navigation options to keep users engaged.

- Regularly monitor for 404 errors using tools like Google Search Console or Screaming Frog.

How does robots.txt influence link indexing?

A robots.txt file instructs search engines on which parts of a site they should or shouldn’t crawl. This file is crucial for optimizing your crawl budget by guiding crawlers away from non-essential pages, like thank-you pages or login screens.

What is the purpose of a robots.txt file?

A robots.txt file directs search engines on how to interact with a website, helping control crawler access to specific areas.

It manages crawl budget by blocking non-essential pages like login areas or duplicate content, allowing search engines to prioritize important pages.

It can also keep compliant crawlers away from non-public areas such as admin panels and staging sites. It is not a security control, though: the file is publicly readable, and anything truly sensitive needs authentication.

This file supports site organization, guiding search engines to relevant content while improving indexing efficiency.

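However, for full indexing control, noindex directives (a robots meta tag or an X-Robots-Tag header) are recommended, because a URL blocked in robots.txt can still be indexed if other pages link to it. A noindex directive only works when the page is not blocked in robots.txt, since crawlers must fetch the page to see the directive.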

How can robots.txt be used to control indexing?

Strictly speaking, robots.txt controls crawling rather than indexing. It is typically used to keep bots out of low-value areas and to reduce unnecessary load on the server.

By specifying certain rules in robots.txt, you can:

- Block access to duplicate content or non-SEO-friendly pages.

- Keep crawlers away from private or low-value sections, so that crawl activity concentrates on high-value content.

For example, to block all crawlers from accessing a specific folder, use:

    User-agent: *
    Disallow: /private-directory/

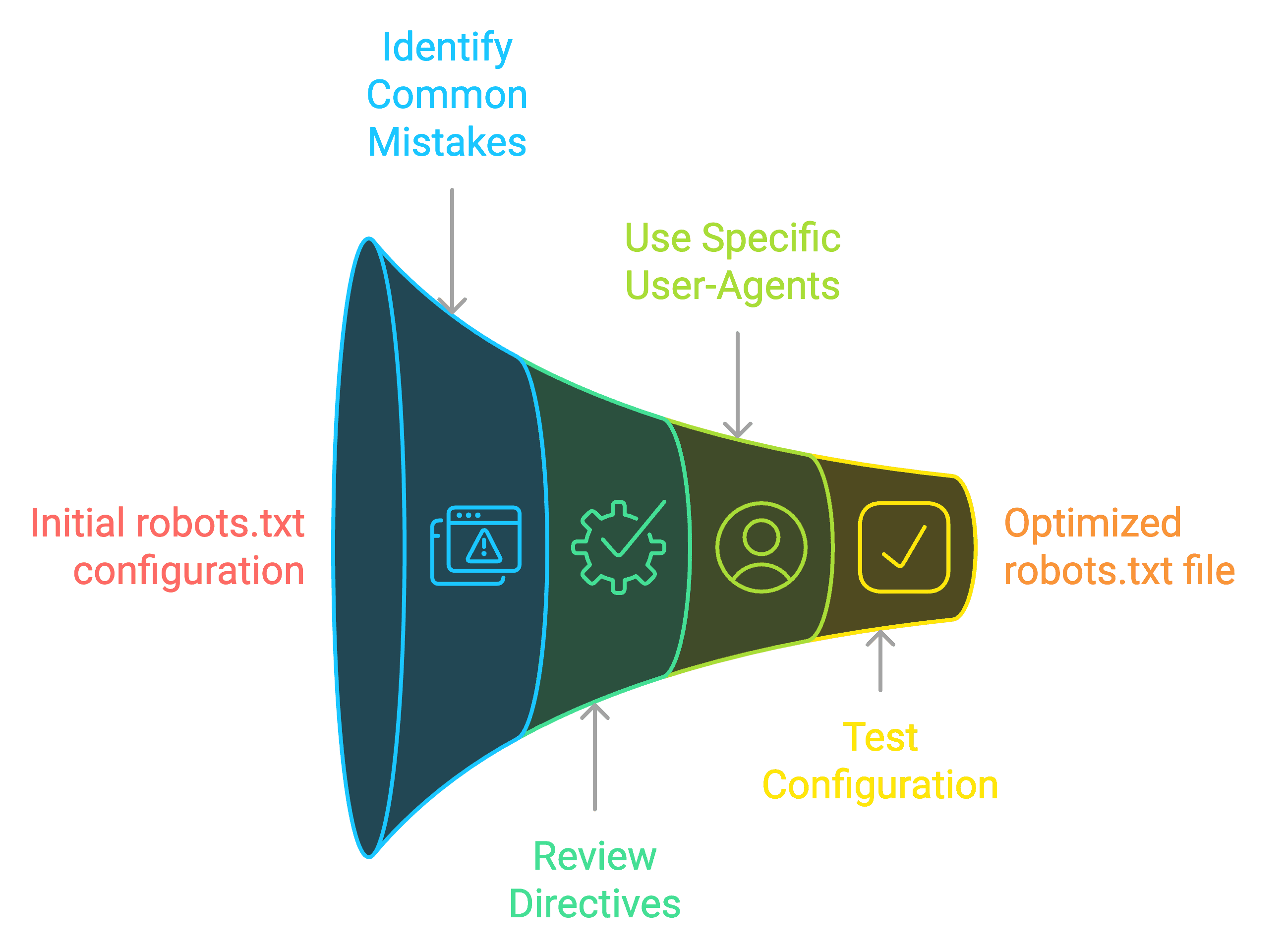
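
One way to sanity-check rules like these before deploying them is Python's standard `urllib.robotparser` module. The sketch below parses the two-line example above from a string. The `is_crawlable` helper and the example URLs are assumptions made for illustration.

```python
from urllib.robotparser import RobotFileParser

# The same rules as the example above, parsed from a string instead of a live site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private-directory/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url: str, user_agent: str = "*") -> bool:
    """Return True if the parsed rules allow `user_agent` to fetch `url`."""
    return parser.can_fetch(user_agent, url)


print(is_crawlable("https://www.example.com/blog/seo-tips"))
print(is_crawlable("https://www.example.com/private-directory/report.html"))
```

The standard-library parser may not match every search engine's rule-matching behavior exactly, so treat the result as a first check rather than a guarantee.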

What are common mistakes to avoid in robots.txt configuration?

Common mistakes in robots.txt configuration can significantly impact link indexing.

These errors include blocking essential resources, using incorrect syntax, and over-restricting search engine access.

A frequent oversight is accidentally blocking the whole site, for example by pairing the wildcard user agent (User-agent: *) with Disallow: /.

Another critical mistake is blocking CSS and JavaScript files, which hinders search engines’ ability to render and understand pages correctly.

To avoid these issues and optimize your robots.txt file for effective indexing:

- Review and update your robots.txt file periodically

- Double-check all directives for accuracy

- Use specific user-agent declarations when necessary

- Regularly test your robots.txt file, for example with the robots.txt report in Google Search Console, which replaced the older robots.txt Tester tool

Why are XML sitemaps important for faster indexing?

An XML sitemap is a file that lists the URLs you want search engines to index and provides additional information, such as when a page was last updated. This roadmap helps search engines discover and index content faster, especially on large or complex sites.

What information should be included in an XML sitemap?

The key elements to include in an XML sitemap are:

- URL location (loc): The URL of the page.

- Last modified date (lastmod): The most recent update to the page.

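- Change frequency (changefreq): How often the page is expected to change. Google has said it ignores this value.

- Priority (priority): Relative importance of the page compared with other URLs on the same site. Google has said it ignores this value as well.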

These elements give search engines a clear overview of the site’s structure and the most crucial pages for indexing.
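
A sitemap with these elements can be generated with Python's standard library. This sketch emits only `loc` and `lastmod`, since `loc` is the only element the sitemap protocol requires for each URL. The function name and example pages are assumptions made for illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Build sitemap XML from (URL, last-modified date as YYYY-MM-DD) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    # Serialize as UTF-8 bytes so the XML declaration names the real encoding.
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True).decode("utf-8")


print(build_sitemap([
    ("https://www.example.com/", "2024-01-15"),
    ("https://www.example.com/seo-tips-for-beginners", "2024-02-01"),
]))
```

The `changefreq` and `priority` elements could be added with further `ET.SubElement` calls if a search engine you target makes use of them.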

How often should XML sitemaps be updated?

The frequency of updates to an XML sitemap depends on how often the content of the website changes. For example:

- Daily updates: For news sites or e-commerce sites with changing inventory.

- Weekly updates: For blogs or content-rich websites.

- Monthly updates: For static sites with infrequent changes.

Regularly updating the sitemap ensures search engines have access to the most up-to-date content.

What are the benefits of submitting sitemaps to search engines?

Submitting an XML sitemap through tools like Google Search Console offers several benefits:

- Faster indexing of new content: New or updated pages are discovered more quickly.

- Improved crawl efficiency: Large sites can ensure deeper pages are crawled.

- Better site structure understanding: Search engines can better comprehend the website’s hierarchy and relationships between pages, which enhances the indexing process and leads to improved visibility in search results.

How does schema markup enhance indexing?

Schema markup is a type of structured data added to a website’s HTML that helps search engines understand the content on a deeper level. This added context can improve how a page is indexed and increase the likelihood of appearing in rich search results such as rich snippets and knowledge panels.

What types of schema markup are most beneficial for indexing?

Several types of schema markup are particularly beneficial for SEO and indexing, including:

- Organization schema: Provides details about your business or organization.

- Product schema: Helps e-commerce pages highlight product details in search results.

- FAQ schema: Optimizes frequently asked questions to appear in search snippets.

- Article schema: Improves the chances of blog posts and news articles being featured in rich snippets.

Implementing the right schema for your site improves search engine understanding and enhances your visibility in search results.
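
As an illustration, Article markup in JSON-LD form can be generated with a few lines of Python. The function name, the chosen properties, and the example values (including the author name) are assumptions made for this sketch. Which properties a page actually needs should be checked against Schema.org and the Rich Results Test.

```python
import json


def article_json_ld(headline: str, author: str, date_published: str, url: str) -> str:
    """Return a <script> element containing Article structured data as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value can never close the script element early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


print(article_json_ld(
    "SEO tips for beginners",
    "Jane Doe",  # placeholder author
    "2024-01-15",
    "https://www.example.com/seo-tips-for-beginners",
))
```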

How does structured data improve search engine understanding?

By clearly defining content types, structured data allows search engines to better interpret the context and relationships within your content.

For example, using review schema tells search engines that certain content represents a customer review, which can then appear as a star rating in search results.

This added level of clarity ensures more accurate indexing, which improves search performance.

What tools can help implement and validate schema markup?

Several tools can assist in implementing and validating schema markup:

- Google’s Structured Data Markup Helper: Provides an easy way to add structured data to your site.

- Schema.org: Offers detailed guidelines and examples for various types of markup.

- Google Rich Results Test: Validates whether your structured data is properly implemented and eligible for rich results.

- JSON-LD Generator Tools: Help generate schema code in JSON-LD format for easy integration into your HTML.

Using these tools ensures that your schema is correctly formatted and functional for indexing.

What are the steps to implement technical SEO for indexing?

Implementing technical SEO for indexing involves a systematic approach to ensure that every element of your website is optimized for crawling and indexing.

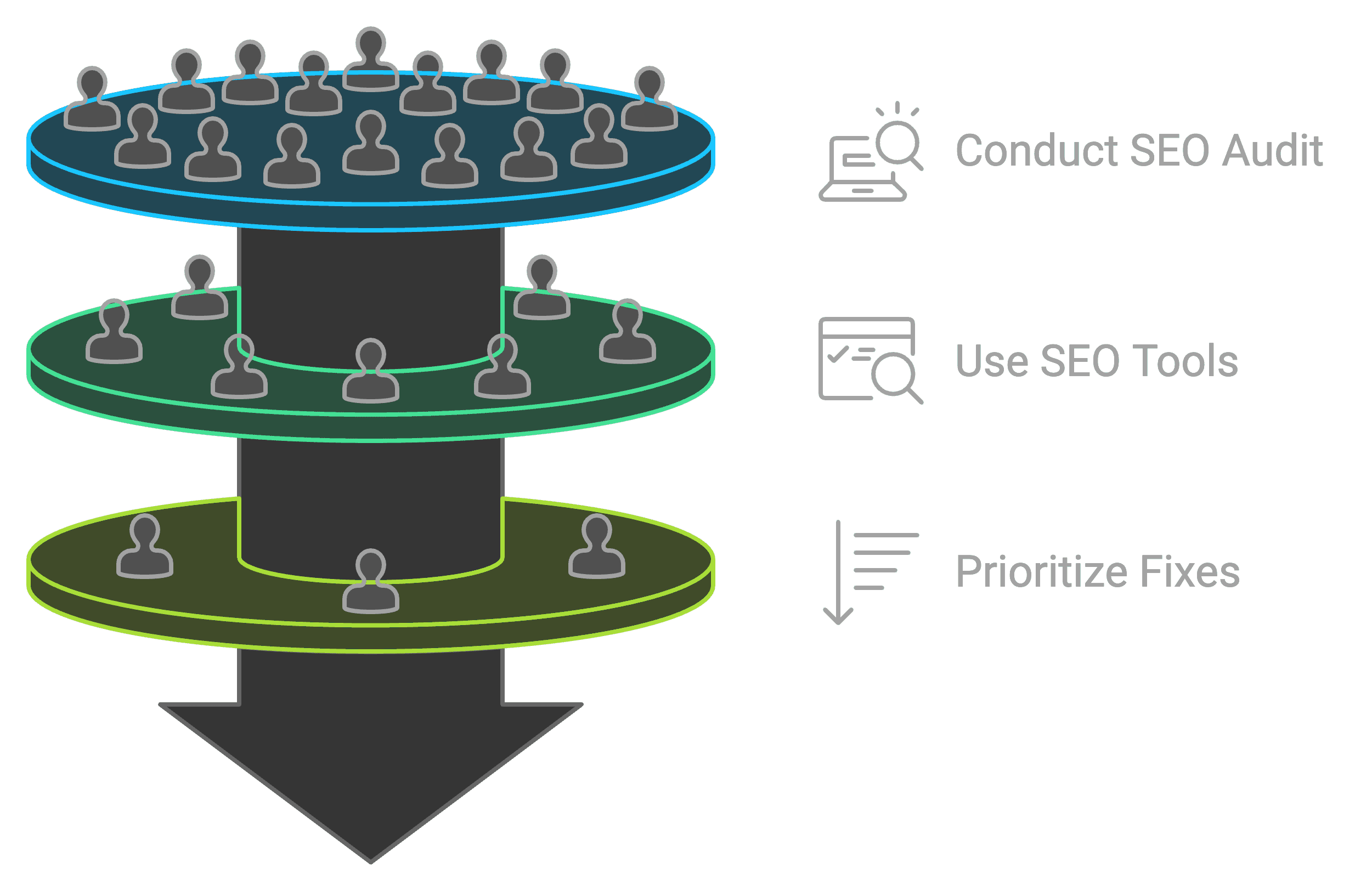

How do you conduct a technical SEO audit for indexing issues?

A technical SEO audit is the first step in identifying indexing issues. This involves:

- Crawling your site using tools like Screaming Frog or DeepCrawl (now Lumar) to identify issues like broken links or incorrect redirects.

- Checking Google Search Console for crawl errors, indexing status, and sitemap issues.

- Evaluating robots.txt and meta tags to ensure key pages aren’t inadvertently blocked from indexing.

By systematically addressing these areas, you can ensure search engines efficiently index your website.
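
The meta tag check in the audit above can be sketched with Python's built-in HTML parser. This example looks only at robots meta tags in the HTML. It does not inspect X-Robots-Tag response headers, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() in {"robots", "googlebot"}:
            content = attr.get("content") or ""
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def has_noindex(html: str) -> bool:
    """Return True if the page's meta tags ask search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow".
    return bool(parser.directives & {"noindex", "none"})


print(has_noindex('<head><meta name="robots" content="noindex, follow"></head>'))
print(has_noindex('<head><meta name="description" content="SEO tips"></head>'))
```

Running a check like this over key landing pages helps catch a noindex tag left behind after a staging release.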

What tools are essential for technical SEO analysis?

Essential tools for conducting a technical SEO analysis include:

- Google Search Console: For monitoring crawl errors, indexing status, and submitting sitemaps.

- Screaming Frog: To identify crawl issues like broken links, duplicate content, or orphan pages.

- Ahrefs or SEMrush: For tracking overall SEO health, backlinks, and organic performance.

- GTmetrix: To evaluate and improve page speed.

These tools help SEO professionals identify, monitor, and resolve indexing issues effectively.

How can you prioritize technical SEO fixes for maximum impact?

Certain technical issues take precedence over others. To prioritize fixes:

- Address critical crawl errors first, such as 404 errors and broken redirects.

- Improve mobile usability and site speed to align with Google’s mobile-first indexing and Core Web Vitals.

- Ensure internal linking structure supports efficient crawling and indexing.

- Implement structured data on high-traffic pages for enhanced indexing visibility.

Focusing on these areas ensures a strong foundation for technical SEO and better overall indexing performance.

How to create a comprehensive technical SEO report for indexing?

A technical SEO report should summarize key findings related to your website’s indexing performance and outline recommendations for improvement.

What key metrics should be included in a technical SEO report?

A useful report tracks a small set of indexing-related metrics. Key metrics to include:

- Indexed pages count: Indicates how much of your content is indexed by search engines.

- Crawl errors: Highlight issues like broken links or inaccessible pages.

- Page load speed: Affects user experience and indexing.

- Mobile-friendliness: Ensures the site is optimized for mobile-first indexing.

- Core Web Vitals: Performance metrics such as LCP, INP (which replaced FID in March 2024), and CLS that can influence search rankings.

Tracking these metrics helps assess how well search engines are indexing your site and pinpoint areas for improvement.
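
The indexed pages metric is easier to act on when expressed as coverage of the URLs you expect to be indexed. A minimal Python sketch follows, assuming you can export both the sitemap URLs and the indexed URLs as sets. The function name is hypothetical.

```python
def index_coverage(sitemap_urls: set[str], indexed_urls: set[str]) -> tuple[float, list[str]]:
    """Return (percentage of sitemap URLs that are indexed, sitemap URLs not indexed)."""
    if not sitemap_urls:
        return 0.0, []
    missing = sorted(sitemap_urls - indexed_urls)
    coverage = 100 * (len(sitemap_urls) - len(missing)) / len(sitemap_urls)
    return coverage, missing


coverage, missing = index_coverage(
    {"/", "/blog", "/pricing", "/contact"},
    {"/", "/blog", "/pricing", "/old-page"},
)
print(f"{coverage:.1f}% indexed; missing: {missing}")
```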

How can you visualize indexing data effectively?

Visualizing data using charts and graphs helps make the report more understandable:

- Line charts can show the crawl rate or number of indexed pages over time.

- Pie charts can illustrate the distribution of indexed vs. non-indexed pages.

- Heatmaps help identify areas of the website with crawl issues.

Using visualization tools makes complex data easier to interpret and actionable for stakeholders.

What recommendations should be highlighted in the report?

Specific recommendations should focus on:

- Fixing crawl errors: Ensure all critical pages are indexable.

- Optimizing sitemaps and robots.txt: Provide clear guidance for search engines.

- Improving site speed: Address slow-loading pages.

- Implementing structured data: To improve the visibility of key content.

Each recommendation should include an estimated impact on indexing performance and SEO health.

What are the latest technical SEO trends for indexing in 2024?

As search engines evolve, technical SEO must adapt to new standards and algorithms. In 2024, there are several key trends that will shape technical SEO for indexing.

How has mobile-first indexing evolved?

Google’s mobile-first indexing means that Google primarily uses the mobile version of a website for indexing and ranking. As mobile usage continues to rise, it’s more important than ever to ensure that:

- Pages load quickly on mobile devices.

- Content is easily accessible on smaller screens.

- User experience remains seamless across devices.

Websites that ignore mobile optimization risk poor indexing and lower rankings.

What impact does Core Web Vitals have on indexing?

Core Web Vitals, a set of performance metrics that includes Largest Contentful Paint (LCP), Interaction to Next Paint (INP, which replaced First Input Delay in March 2024), and Cumulative Layout Shift (CLS), are part of Google’s page experience signals and can influence rankings. They are not a direct indexing requirement, but slow server responses can reduce how much of a site gets crawled.

To stay competitive, websites must focus on optimizing:

- Page speed: Ensure that the main content loads quickly.

- Interactivity: Reduce delays in user interactions.

- Visual stability: Minimize unexpected shifts in layout.

These metrics not only improve user experience but also enhance search engine indexing.
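
The three areas above map onto measurable thresholds. The Python sketch below rates a measurement against the thresholds Google publishes for LCP (2.5 s and 4.0 s), INP (200 ms and 500 ms), and CLS (0.1 and 0.25). INP is the interactivity metric that took over from FID in March 2024. The function name and labels are assumptions made for illustration.

```python
# (good up to, needs improvement up to); anything above the second value is poor.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def rate_vital(metric: str, value: float) -> str:
    """Classify one Core Web Vitals measurement."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


for metric, value in (("LCP", 2.1), ("INP", 350), ("CLS", 0.3)):
    print(metric, value, rate_vital(metric, value))
```

Google assesses these metrics at the 75th percentile of real-user page loads, so a single lab measurement is only a rough indicator.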

How are voice search and AI affecting technical SEO strategies?

With the rise of voice search and AI-driven algorithms like BERT and MUM, SEO strategies must adapt to more conversational queries and better understanding of context.

Voice search requires optimizing for long-tail keywords and natural language patterns, while AI advancements mean that search engines can now better understand user intent. As a result, websites must focus on providing clear, comprehensive content that directly answers user queries to improve indexing and ranking.

Does technical SEO for indexing require coding skills?

While basic coding knowledge is helpful, technical SEO doesn’t always require in-depth programming skills. Many tasks can be handled using tools and plugins, though collaborating with developers is beneficial for more complex issues.

What are the basic coding concepts useful for technical SEO?

Basic coding knowledge makes technical SEO work easier. Useful concepts include:

- HTML: To understand on-page elements and structured data.

- CSS/JavaScript: For optimizing page load and render times.

- HTTP status codes: To handle redirects and error pages correctly.

These concepts are foundational for implementing and troubleshooting technical SEO tasks.

Which technical SEO tasks can be performed without coding?

Many technical SEO tasks can be performed using SEO tools without coding:

- Submitting sitemaps: Tools like Google Search Console offer simple submission methods.

- Checking crawl errors: Tools like Screaming Frog provide reports without needing manual coding.

- Optimizing metadata: Platforms like WordPress allow easy adjustments to meta tags through plugins.

These tasks ensure SEO professionals can manage most technical SEO optimizations without relying heavily on coding.

How can SEO professionals collaborate with developers for indexing optimization?

For more complex technical tasks—such as improving site speed, fixing complex redirects, or implementing advanced schema—SEO professionals can work closely with developers. Effective collaboration involves:

- Clearly communicating SEO priorities.

- Providing detailed technical specifications for changes.

- Regularly monitoring the impact of development changes on indexing.

This teamwork ensures technical issues are resolved efficiently, leading to better indexing outcomes.

How can Backlink Indexing Tool enhance your technical SEO strategy?

Backlink Indexing Tool offers several features that directly enhance the effectiveness of your technical SEO strategy by ensuring your backlinks are indexed faster and more reliably, which is critical to improving rankings.

What unique features does Backlink Indexing Tool offer for improving indexation?

Backlink Indexing Tool provides several unique features:

- No Google Search Console requirement: Users can submit links for indexing without needing Google Search Console.

- Credit refund system: Automatic refunds for unindexed links.

- Pay-as-you-go model: Offers flexibility in usage, allowing SEO professionals to manage costs effectively.

- Detailed reporting: Comprehensive reports help track the progress of backlinks being indexed.

These features make it easier to ensure that your backlinks are recognized by search engines, improving your overall SEO performance.

How does Backlink Indexing Tool’s high indexing rate benefit SEO efforts?

The high indexing rate of Backlink Indexing Tool offers SEO professionals a distinct advantage.

A higher indexing rate means a larger proportion of backlinks will be recognized and factored into the site’s ranking algorithm faster.

- Faster recognition of backlinks: This ensures that the benefits of link-building campaigns, such as increased domain authority and improved search rankings, are realized more quickly.

- Improved search visibility: Indexed backlinks contribute directly to higher rankings for targeted keywords, driving more organic traffic.

Can Backlink Indexing Tool integrate with existing SEO workflows?

Backlink Indexing Tool can integrate with most existing SEO workflows. Tools of this kind are designed to enhance link-building efforts by ensuring that search engines quickly recognize and index new backlinks.

Integrating a backlink indexing tool involves the following key steps:

- Link Monitoring and Submission: When integrated into your workflow, a backlink indexing tool can automatically submit newly acquired links to search engines for indexing. This saves time that would otherwise be spent on manual submissions.

- Automation with SEO Tools: Most backlink indexing tools can integrate with platforms like Ahrefs, SEMrush, or Moz, allowing you to export data directly or set up automated submissions.

- API Access: If your SEO team uses custom scripts or dashboards to manage backlinks, the tool’s API can automate the indexing process directly from your dashboard.

- Scalability: By integrating these tools into your workflow, you can handle the indexing of thousands of backlinks at once without overloading your SEO team.

- Performance Tracking: By integrating these tools into your reporting workflow, you can monitor which backlinks have been indexed and adjust your strategy accordingly. This data can also be valuable when combined with analytics tools to assess overall link-building performance.

What is the process of using Backlink Indexing Tool for technical SEO?

Backlink Indexing Tool streamlines the process of getting backlinks indexed by search engines like Google, providing an efficient solution for SEO professionals who need to ensure their backlinks are recognized and factored into ranking algorithms.

The process involves three primary steps: link submission, progress tracking through reports, and credit management for unindexed links.

How do you submit links for indexing through Backlink Indexing Tool?

The link submission process with Backlink Indexing Tool is designed for simplicity and scalability:

- Login and access dashboard: Users start by logging into the Backlink Indexing Tool dashboard, which provides access to link submission features.

- Manual or bulk submission: Users can manually enter individual URLs or use the bulk upload feature to submit up to 10,000 links at once via a CSV file. This bulk submission capability is particularly beneficial for large-scale SEO campaigns, allowing users to process a high volume of links efficiently.

- Select indexing speed: Users can choose between normal indexing or priority indexing. Priority indexing consumes more credits but speeds up the indexing process, which is useful for time-sensitive campaigns.

- Confirmation and processing: After submission, the Backlink Indexing Tool immediately begins processing the URLs, using its proprietary technology to encourage search engines to crawl and index the links.

This straightforward process ensures that SEO professionals can manage link submissions quickly and effectively, reducing the manual effort typically associated with link indexing.
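
For campaigns larger than the 10,000-link limit mentioned above, the link list has to be split into several files before upload. The Python sketch below shows one way to do that. The single-column layout with a `url` header is an assumption made for illustration, not the tool's documented CSV format.

```python
import csv
import io

MAX_LINKS_PER_UPLOAD = 10_000  # bulk limit stated in the text above


def csv_batches(urls: list[str], batch_size: int = MAX_LINKS_PER_UPLOAD) -> list[str]:
    """Deduplicate URLs and return CSV text for each upload batch."""
    # dict.fromkeys removes duplicates while keeping the original order.
    unique = list(dict.fromkeys(u.strip() for u in urls if u.strip()))
    batches = []
    for start in range(0, len(unique), batch_size):
        buffer = io.StringIO()
        writer = csv.writer(buffer)
        writer.writerow(["url"])  # assumed header row
        writer.writerows([u] for u in unique[start:start + batch_size])
        batches.append(buffer.getvalue())
    return batches


links = [f"https://example.com/page-{i}" for i in range(25_000)]
print(len(csv_batches(links)), "files to upload")
```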

What reports does Backlink Indexing Tool provide for tracking indexing progress?

Backlink Indexing Tool provides comprehensive reports to help users track the status and performance of their submitted backlinks:

- Indexing status report: After 14 days of processing, users receive a detailed report that shows the indexing status of each submitted URL. This report includes:

  - Indexed: URLs that have been successfully indexed by search engines.

  - Pending: URLs that are still in the process of being indexed.

  - Not Indexed: URLs that have not been indexed by the end of the processing period.

- Time-to-Index metrics: The report provides data on how quickly the submitted links were indexed after submission, helping users understand the overall efficiency of the tool.

- Historical performance: Users can compare the current campaign’s indexing success with previous submissions, allowing for performance trend analysis over time.

These reports are available in both visual formats (charts and graphs) and downloadable CSV files, making it easy for users to integrate the data into their SEO dashboards and analyze the results effectively.

How does the credit refund system work for unindexed links?

One of the key features of Backlink Indexing Tool is its automatic credit refund system. Users only pay for successfully indexed links, ensuring cost efficiency. Here’s how the system works:

- Credit deduction upon submission: When users submit links for indexing, credits are immediately deducted from their account based on the number of links submitted.

- 14-Day processing period: Backlink Indexing Tool processes the links over a 14-day period, attempting to get each one indexed.

- Refund for unindexed links: After the 14 days, any links that remain unindexed are eligible for a credit refund. The corresponding credits for those links are returned to the user’s account automatically.

This system ensures that users only pay for the results they achieve, reducing the financial risk associated with unindexed links.
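
The arithmetic behind the refund steps can be sketched as follows. The flat rate of one credit per link is an assumption made for illustration. Actual pricing, including the higher cost of priority indexing, is not specified here.

```python
def settle_credits(submitted: int, indexed: int, credits_per_link: int = 1) -> dict[str, int]:
    """Compute credits deducted at submission and refunded after the 14-day period."""
    if not 0 <= indexed <= submitted:
        raise ValueError("indexed must be between 0 and submitted")
    deducted = submitted * credits_per_link
    refunded = (submitted - indexed) * credits_per_link
    return {"deducted": deducted, "refunded": refunded, "net_cost": deducted - refunded}


print(settle_credits(submitted=1000, indexed=820))
```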

How does Backlink Indexing Tool ensure safe and effective indexing?

Backlink Indexing Tool uses a range of techniques and safeguards to ensure that backlinks are indexed effectively while adhering to search engine guidelines. The tool is designed to avoid triggering penalties and to maximize indexing success.

What methods does Backlink Indexing Tool use to avoid link spam penalties?

To prevent penalties from search engines, Backlink Indexing Tool employs several white-hat strategies:

- Gradual link submission: Links are submitted to search engines in staggered batches, mimicking natural link acquisition patterns. This prevents the appearance of sudden, unnatural link spikes that could be flagged as suspicious by search engines.

- Diverse indexing signals: The tool uses a variety of indexing methods, including social signals and content syndication, to make the links appear more natural. This helps avoid detection by spam filters.

- Compliance with Robots.txt: The tool respects the robots.txt file of each website, ensuring it doesn’t attempt to index links that are blocked by the website owner, further maintaining ethical indexing practices.

How does Backlink Indexing Tool compare to manual indexing methods?

Backlink Indexing Tool provides distinct advantages over manual indexing in terms of speed, efficiency, and usability.

- Time-Saving bulk submission: Manual indexing requires submitting URLs individually, often through tools like Google Search Console, which is time-consuming for larger campaigns. In contrast, Backlink Indexing Tool supports high-volume submissions, handling up to 10,000 links at once, making it ideal for large-scale link-building efforts.

- Improved indexing efficiency: While manual methods can yield mixed results, often achieving a 30-50% success rate, Backlink Indexing Tool is designed to outperform standard manual indexing. Though a precise success rate isn’t specified, it aims for higher effectiveness.

- Comprehensive reporting: Manual indexing offers limited visibility, making it difficult to track progress. Backlink Indexing Tool provides detailed reports and metrics, giving users complete transparency over their campaign’s indexing progress and success.

What safeguards are in place to protect your SEO efforts?

Backlink Indexing Tool implements several safeguards to ensure that SEO efforts are protected:

- Rate limiting: The tool prevents excessive submission rates by limiting the number of links submitted at once. This helps avoid drawing unwanted attention from search engines.

- IP rotation: Backlink Indexing Tool uses a rotating pool of IP addresses to submit links, ensuring that the submissions appear natural and spread out over different geographical regions.

- Secure data handling: All user data, including submitted URLs and indexing progress reports, is encrypted and stored securely, protecting against unauthorized access.

These safeguards work together to ensure that SEO campaigns remain compliant with search engine guidelines and that users’ backlink profiles are protected from potential penalties.

How can SEO professionals measure the ROI of Backlink Indexing Tool for their campaigns?

By improving the speed and reliability of backlink indexing, Backlink Indexing Tool can significantly enhance the return on investment (ROI) for SEO campaigns.

SEO professionals can measure the tool’s impact by tracking key metrics before and after its use:

- Indexing Rates: Compare the percentage of successfully indexed backlinks against previous manual indexing efforts.

- Keyword Ranking Improvements: Monitor shifts in targeted keyword positions, especially those supported by newly indexed backlinks.

- Traffic Growth: Assess increases in organic traffic as backlinks enhance the website’s visibility.

- Conversion Rates: Track improvements in goal completions or sales that often follow higher rankings and increased traffic.
